The 2026 AI Roadmap: From Prompting to Building Autonomous Systems

A forward-looking guide that prioritizes "Agentic AI" and RAG (Retrieval-Augmented Generation) over traditional algorithm training, aimed at career switchers and tech enthusiasts.

The Future of Learning is Agentic

By 2026, the barrier to entry for AI has completely changed. You no longer need to spend months learning the nuances of stochastic gradient descent just to build a chatbot. Instead, the focus has shifted toward orchestration—the ability to connect powerful existing models to your own data and systems.

If you’re starting today, here is your non-negotiable roadmap for the year.

Phase 1: The Modern Foundation

Forget the 400-page math textbooks. Start with Python 3.x. It remains the "language of AI," but your focus should be on using it to interact with APIs.

Phase 1 is the most critical stage of your journey. In 2026, "Foundations" no longer means memorizing every Python library; it means building computational literacy—the ability to understand how data moves through a system and how to orchestrate that flow using code.

1. Advanced Python for AI Orchestration

By 2026, Python remains the "lingua franca" of AI, but the way we use it has changed. You must move beyond basic syntax to master Asynchronous Programming and Type Safety.

Asynchronous Python (

asyncio): AI agents often wait for API responses from models like GPT-4 or Claude. Learning to writeasynccode allows your system to handle hundreds of these requests simultaneously without crashing.Data Validation with Pydantic: When an AI model sends you data, it’s often "messy." You must use Pydantic to enforce strict data structures, ensuring your code doesn't break when a model returns unexpected text.

Modern Environment Management: Ditch old tools for uv or Ruff. These 2026-standard tools manage your projects at "Rust speed," making your development workflow 10-100x faster than traditional methods.

Primary Resources:

Harvard CS50 Python: The gold standard for learning modern, clean Python coding habits.

Master Python in 2026 - SkillAI: A specialized roadmap focusing on async, type hints, and modern libraries.

2. The "Essential" Math (Strip the Fluff)

You do not need a PhD, but you must understand the "Three Pillars" to prevent your AI models from being "black boxes".

Linear Algebra (The Language of Tensors): AI models process information as large grids of numbers (matrices). You must understand Matrix Multiplication and Dot Products to understand how data is transformed.

Calculus (The Engine of Learning): Specifically, master Partial Derivatives and the Chain Rule. These concepts explain "Backpropagation"—the process by which an AI looks at its mistakes and corrects them.

Statistics (The Guardrail of Truth): Learn Bayesian Statistics and Probability Distributions. In 2026, AI is probabilistic, not certain. You need stats to know when an AI is "hallucinating" versus when it's confident.

Primary Resources:

Khan Academy Calculus/Linear Algebra: The best free resource for conceptual clarity.

Mathematics for ML and Data Science (Coursera): A high-production-value course that applies math directly to AI problems.

3. The 2026 Data Stack

Data is the "fuel" for AI. In 2026, the standard has shifted from just "storing" data to "preparing" it for models.

Polars (The New Pandas): While Pandas is still used, Polars is the 2026 favorite for high-performance data manipulation because it is written in Rust and uses "lazy evaluation" to optimize queries before they even run.

NumPy (The Mathematical Foundation): This is the core library that underpins almost every AI tool. You must be comfortable with Broadcasting and Vectorization—techniques that allow Python to process millions of calculations at once.

Structured Query Language (SQL): AI often lives on top of databases. Learning to write complex SQL joins is still a non-negotiable skill for any AI engineer.

Primary Resources:

Python for Data Science Full Course 2026: A complete, free guide to NumPy, Pandas, and Matplotlib.

Polars Documentation: The official guide for mastering the fastest data library in the ecosystem.

Dive deeper into Phase 1 with these comprehensive roadmap and tutorial videos for 2026:

Python Roadmap For Beginners 2026 | How To Learn Python ..., YouTube · Simplilearn · 1970 M01 1

Python Full Course for Beginners to Advanced with AI (2026), YouTube · The iScale · 1970 M01 1

Correct Order to Learn AI Complete Roadmap for Beginners ..., YouTube · PythonLife · 1970 M01 1

The Math Needed for AI/ML (Complete Roadmap), YouTube · Harry Connor AI · 1970 M01 1

Applied Data Science With Python Full Course 2026 [Free ..., YouTube · Simplilearn · 1970 M01 1

Code: Can you write an

asyncPython script that validates data with Pydantic?Math: Do you conceptually understand how a Derivative tells a model to change its "weights"?

Data: Can you use Polars or NumPy to clean a messy dataset of 1 million rows in seconds?

Would you like a specific 4-week study schedule to master these Phase 1 skills?

Phase 2: Master the "XML Sandwich" & Advanced Prompting

Prompting is no longer just "asking a question." In 2026, it's about System Instructions.

Technique: Learn Chain-of-Thought (prompting the AI to explain its logic) and Few-Shot Prompting (providing examples). These are the keys to making AI reliable enough for professional use.

In 2026, Phase 2 is where you transition from a coder to a Context Architect.

By this stage, you aren't just "talking" to an AI; you are engineering the high-fidelity information environment that allows a model to reason perfectly. In 2026, the industry has moved away from "trial-and-error" prompting toward Structured Prompt Engineering (SPE).

1. Structured Prompting: The "XML Sandwich"

In 2026, long-form, messy prompts are obsolete. Professionals use XML-based structures to create clear boundaries between instructions, context, and user data. This prevents "Instruction Injection" and keeps the model focused.

The Structure:

<system>: Defines the persona and hard constraints (e.g., "You are a Senior Data Scientist").<context>: Background information or retrieved data.<examples>: The "Few-Shot" section where you show, don't tell.<instruction>: The specific task to perform.

Why it matters: Models in 2026 are trained to recognize these tags as "metadata," allowing them to prioritize your instructions over the text they are processing.

2. Cognitive Architectures: Chain-of-Thought (CoT)

Models are much smarter in 2026, but they still "rush" to an answer if not guided. You must master the art of Reasoning Extraction.

Zero-Shot CoT: Using the modern version of "Think step by step." In 2026, this is done by prompting the model to output its internal logic inside

<thinking>tags before the final<answer>.Self-Consistency: A technique where you ask the model to generate three different reasoning paths and then "vote" on the most common answer. This is critical for math and logic tasks.

Prompt Chaining: Breaking a massive task into 5 small prompts. Prompt 1 analyzes the sentiment; Prompt 2 extracts keywords; Prompt 3 writes a draft based on those keywords.

3. Dynamic Few-Shot Learning

"Few-Shot" prompting (giving examples) is no longer static.

The 2026 Standard: Instead of hard-coding 3 examples into a prompt, you build a "Library of Examples." When a user asks a question, your code searches your library, finds the 3 most relevant examples, and injects them into the prompt dynamically.

Negative Constraint Prompting: Learning how to tell an AI what not to do effectively. In 2026, we use "guardrails" to ensure the AI never breaks brand voice or safety protocols.

4. Evaluation and Prompt Versioning

In Phase 2, a prompt is treated like a piece of software.

Prompt Git: Using tools to version-control your prompts. If you change a word and the AI's accuracy drops 5%, you need to be able to "roll back."

The Golden Dataset: You must learn to create a "Ground Truth" set of 50–100 questions and perfect answers. Every time you update your prompt, you run it against this set to ensure quality hasn't dipped.

Phase 2 Resource Toolkit

ResourceSkill FocusRecommended PlatformThe Prompt Engineering GuideIndustry standard techniquesPromptingGuide.aiDeepLearning.AI Short CoursesBuilding with LLM APIsDeepLearning.aiAnthropic’s Prompt LibraryModern XML examplesAnthropic DocsOpenAI CookbookAdvanced Python + PromptingGitHub/OpenAI-Cookbook

Phase 2 Mastery Checklist

Tagging: Can you write a prompt using XML tags that keeps a model from being distracted by "fake" instructions within a user's input?

Reasoning: Can you force a model to show its work in a

<thinking>block before giving a structured JSON output?Consistency: Can you build a "Few-Shot" prompt that makes the AI consistently output code in your specific company style?

Phase 3: Building with RAG (Retrieval-Augmented Generation)

This is where the magic happens. Companies don't want a generic AI; they want an AI that knows their business.

The Skill: Learn to use Vector Databases like Pinecone to give an AI "long-term memory" by connecting it to your PDFs, emails, or databases.

Phase 3 is where your AI gains "Long-Term Memory." In 2026, building a Retrieval-Augmented Generation (RAG) system is the industry standard for making AI accurate, up-to-date, and private.

While a standard AI only knows what it was trained on (its "cutoff date"), a RAG system looks up your specific documents before it speaks.

1. The RAG Architecture (The 5-Step Pipeline)

To build a production-grade RAG system, you must master these five interconnected steps:

Ingestion & Data Prep: You don't just "upload" a PDF. You must use Semantic Chunking to break documents into meaningful snippets.

Pro Tip: In 2026, use

RecursiveCharacterTextSplitterwith a 10–15% overlap to ensure context isn't severed between chunks.

Embedding Generation: Convert your text chunks into mathematical vectors.

Tool: Use models like OpenAI’s

text-embedding-3-largeor Cohere’sembed-v4for high-performance retrieval.

The Vector Database (The Brain): Store those vectors so the AI can search them by meaning, not just keywords.

Hybrid Retrieval: This is the "secret sauce" of 2026. A purely semantic search might miss a specific product SKU or legal ID. Hybrid Search combines vector similarity with traditional keyword search (BM25) to get the best of both worlds.

Generation: The AI takes the retrieved chunks and the user's query to generate a "grounded" answer with citations.

2. Advanced Optimization (From Demo to Production)

In 2026, a simple RAG isn't enough. You must implement these two high-level techniques:

Re-Ranking: Your vector search might return the top 10 chunks, but the actual answer is at #7. Use a Cross-Encoder Re-Ranker (like Cohere Rerank) to re-sort those 10 results for maximum precision.

Query Expansion (HyDE): If a user's question is vague, use the AI to generate a "hypothetical answer" first, then use that answer to search your database. This often finds better context than the raw query.

3. Evaluation (The RAGAS Framework)

The biggest mistake engineers make is shipping RAG without measuring it. In 2026, we use RAGAS (Retrieval Augmented Generation Assessment) to score four critical metrics:

Faithfulness: Is the answer factually supported by the retrieved context?

Answer Relevancy: Does the answer actually address the user's question?

Context Precision: Were the retrieved documents actually relevant?

Context Recall: Did the retriever find all the information needed to answer?

Phase 3 Resource Roadmap

GoalRecommended ToolBest ResourceOrchestrationLangChainLangChain RAG TutorialVector StoragePineconePinecone Learning CenterEvaluationRAGASEvaluating RAG with Ragas + LangSmithAdvanced TutorialDeepLearning.AIAdvanced RAG Techniques Course

Phase 3 Checklist:

Can you build a pipeline that cites its sources?

Do you have a "Golden Dataset" of 50 Q&A pairs to test your system?

Have you implemented a Re-Ranker to improve retrieval quality by at least 15%?

Ready to finish with Phase 4: Agentic AI (Building Systems that "Act" Autonomously)?

In 2026, RAG (Retrieval-Augmented Generation) is the industry standard for transforming a generic Learning Management System (LMS) into a Personalized AI Tutor. Instead of students searching through hours of video or hundreds of PDFs, the LMS "reads" the course material and answers questions instantly with citations.

The Architecture: How RAG works in an LMS

Ingestion: Course PDFs, Transcripts, and Quizzes are broken into chunks.

Vectorization: These chunks are turned into numbers (embeddings).

Storage: Vectors are stored in a database (e.g., Pinecone).

Retrieval: When a student asks "How do I solve X?", the system finds the most relevant course chunks.

Generation: The AI uses the chunks to write an answer, citing the specific lesson.

Implementation Guide: Building a RAG Tutor for an LMS

To follow this, you need a Python environment with langchain, openai, and pinecone-client installed.

Step 1: Data Ingestion & Semantic Chunking

We don't just cut text at random; we split it so thoughts stay together.

from langchain_text_splitters import RecursiveCharacterTextSplitter

from langchain_community.document_loaders import PyPDFLoader

# Load a course textbook or lecture notes

loader = PyPDFLoader("course_material.pdf")

data = loader.load()

# Split text into 1000-character chunks with 10% overlap

text_splitter = RecursiveCharacterTextSplitter(

chunk_size=1000,

chunk_overlap=100,

add_start_index=True

)

chunks = text_splitter.split_documents(data)

print(f"Split course material into {len(chunks)} chunks.")

Step 2: Creating the Vector Brain

We convert these chunks into "embeddings" and store them in Pinecone.

import os

from langchain_openai import OpenAIEmbeddings

from langchain_pinecone import PineconeVectorStore

os.environ["OPENAI_API_KEY"] = "your_key"

os.environ["PINECONE_API_KEY"] = "your_key"

# 2026 Standard Model: text-embedding-3-small (Fast & Cheap)

embeddings = OpenAIEmbeddings(model="text-embedding-3-small")

# Indexing the data into Pinecone

vector_db = PineconeVectorStore.from_documents(

chunks,

embeddings,

index_name="lms-tutor-index"

)

Step 3: The Retrieval and Generation Loop

Now, we build the "Tutor" logic that searches the material before answering.

from langchain_openai import ChatOpenAI

from langchain.chains import RetrievalQA

# Initialize the LLM (GPT-4o or similar)

llm = ChatOpenAI(model_name="gpt-4o", temperature=0)

# Create the Retrieval-QA Chain

tutor_chain = RetrievalQA.from_chain_type(

llm=llm,

chain_type="stuff",

retriever=vector_db.as_retriever(search_kwargs={"k": 3}) # Get top 3 chunks

)

# Student asks a question

question = "What are the three main laws of thermodynamics mentioned in Lesson 4?"

response = tutor_chain.invoke(question)

print(f"AI Tutor: {response['result']}")

Real Systems Using RAG Today (2026)

Coursera / Udacity: Use RAG to power "AI Coaches" that help students troubleshoot code snippets by retrieving relevant documentation from the course.

Khan Academy (Khanmigo): Uses a sophisticated RAG-style system to guide students through math problems without giving them the answer immediately.

Notion AI / Obsidian: These productivity tools use RAG to let you "chat with your notes."

Enterprise LMS (Cornerstone/Docebo): Large companies use RAG to let employees ask questions about internal compliance PDFs or technical manuals.

Phase 3 Mastery Resources for LMS RAG

Pinecone's RAG Guide: The definitive guide on vector database architecture.

LangChain's LMS Template: A pre-built blueprint for Q&A over documents.

DeepLearning.AI: Preprocessing Data for RAG: Learn how to clean messy PDFs for better AI accuracy.

Phase 3 Checklist for your LMS:

Does the AI provide the page number or source link in its answer?

Have you added a Re-ranker to ensure the most important course chunk is always first?

Is your system GDPR compliant (ensuring one student's data isn't retrieved for another)?

In 2026, NestJS has become the gold standard for building enterprise-grade AI backends due to its modularity and first-class support for TypeScript. Implementing RAG in NestJS requires a clean separation between your Ingestion Service (loading data) and your Inquiry Service (answering questions).

The Stack

Framework: NestJS (TypeScript)

Orchestration: LangChain.js (The industry standard for JS/TS AI)

Vector Database: Pinecone or Milvus

LLM: OpenAI GPT-4o or Claude 3.5

1. Core Service Implementation

First, install the necessary dependencies:npm install @langchain/openai @langchain/pinecone @pinecone-database/pinecone pdf-parse

The RAG Service (rag.service.ts)

This service handles both the "storing" of course material and the "retrieval" for students.

import { Injectable } from '@nestjs/common';

import { OpenAIEmbeddings, ChatOpenAI } from "@langchain/openai";

import { PineconeStore } from "@langchain/pinecone";

import { Pinecone as PineconeClient } from "@pinecone-database/pinecone";

import { RecursiveCharacterTextSplitter } from "langchain/text_splitter";

import { PDFLoader } from "@langchain/community/document_loaders/fs/pdf";

@Injectable()

export class RagService {

private vectorStore: PineconeStore;

constructor() {

const pinecone = new PineconeClient();

const index = pinecone.Index(process.env.PINECONE_INDEX);

// Initialize Vector Store with OpenAI Embeddings

this.vectorStore = new PineconeStore(new OpenAIEmbeddings(), {

pineconeIndex: index,

});

}

// Phase 1: Ingest Course Material

async ingestCoursePdf(filePath: string) {

const loader = new PDFLoader(filePath);

const rawDocs = await loader.load();

// Semantic Chunking

const splitter = new RecursiveCharacterTextSplitter({

chunkSize: 1000,

chunkOverlap: 200,

});

const docs = await splitter.splitDocuments(rawDocs);

// Upload to Vector DB

await this.vectorStore.addDocuments(docs);

return { status: 'success', chunks: docs.length };

}

// Phase 2: The Inquiry (Student Question)

async answerStudentQuestion(question: string) {

const model = new ChatOpenAI({ modelName: "gpt-4o", temperature: 0 });

// 1. Search for top 4 relevant context snippets

const relevantDocs = await this.vectorStore.similaritySearch(question, 4);

const context = relevantDocs.map(d => d.pageContent).join("\n\n");

// 2. Build the "Phase 2" Structured Prompt

const prompt = `

<system>You are a Master Tutor for the LMS. Answer using ONLY the context below.</system>

<context>${context}</context>

<question>${question}</question>

`;

const response = await model.invoke(prompt);

return {

answer: response.content,

sources: relevantDocs.map(d => d.metadata.source) // Return citations

};

}

}

2. The Controller (rag.controller.ts)

Expose the AI logic via a clean REST API.

import { Controller, Post, Body, UploadedFile, UseInterceptors } from '@nestjs/common';

import { FileInterceptor } from '@nestjs/platform-express';

import { RagService } from './rag.service';

@Controller('ai-tutor')

export class RagController {

constructor(private readonly ragService: RagService) {}

@Post('upload-lesson')

@UseInterceptors(FileInterceptor('file'))

async uploadLesson(@UploadedFile() file: Express.Multer.File) {

return this.ragService.ingestCoursePdf(file.path);

}

@Post('ask')

async askQuestion(@Body('question') question: string) {

return this.ragService.answerStudentQuestion(question);

}

}

3. Why NestJS is perfect for RAG in 2026

Dependency Injection: You can easily swap your Vector DB (e.g., from Pinecone to a local PGVector) by simply changing the provider in your module.

Middlewares & Guards: In an LMS, you don't want a student in "Math 101" accessing "Biology 202" data. NestJS Guards allow you to inject Metadata Filters into your similarity search so the AI only "sees" the student's specific enrolled courses.

Scalability: Using BullMQ with NestJS allows you to process massive PDF ingests in the background without blocking the main API thread.

Full Resources for Node/Nest AI

LangChain.js Documentation: The bible for JS-based AI.

Pinecone Node.js SDK: Specifics on managing vector indexes in JavaScript.

NestJS Official Recipes (Streaming): Essential for "streaming" AI responses (like ChatGPT) to the frontend in real-time.

Would you like a sample code for "Metadata Filtering" to ensure students only see their own course data?

To get a NestJS RAG system running in 2026, you need to connect three main pieces: The Intelligence (OpenAI), The Memory (Pinecone), and The Environment (Your .env file).

1. How to get your API Keys

OpenAI Key:

Go to openai.com.

Navigate to API Keys and click "Create new secret key."

How it works: This key tells OpenAI who to bill and allows your code to send text to their models (

gpt-4o) and embedding engines.

Pinecone Key:

Go to pinecone.io.

Create a project and go to the API Keys tab.

Create an Index with 1536 dimensions (the standard for OpenAI embeddings).

How it works: This key lets your application read/write to your "Vector Database" so you can store and search your course PDFs.

2. The Environment File (.env)

Create this file in your root folder. Never commit this to GitHub.

# AI Model Configuration

OPENAI_API_KEY=sk-proj-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx

OPENAI_MODEL=gpt-4o

# Vector Database (Memory)

PINECONE_API_KEY=pcsk_xxxxxxxxxxxxxxxxxxxxxxxxxxxx

PINECONE_INDEX=lms-tutor-index

PINECONE_ENVIRONMENT=us-east-1-aws

# System Settings

PORT=3000

DATABASE_URL=postgres://user:pass@localhost:5432/lms_db

3. The Implementation Sample

In NestJS, we use the @nestjs/config package to safely read these keys.

The Configuration Module (app.module.ts)

import { Module } from '@nestjs/common';

import { ConfigModule } from '@nestjs/config';

import { RagService } from './rag.service';

@Module({

imports: [

ConfigModule.forRoot({ isGlobal: true }), // Makes .env available everywhere

],

providers: [RagService],

})

export class AppModule {}

The Secure RAG Service (rag.service.ts)

This shows how the system uses the keys to perform a search.

import { Injectable } from '@nestjs/common';

import { ConfigService } from '@nestjs/config';

import { OpenAIEmbeddings } from "@langchain/openai";

import { PineconeStore } from "@langchain/pinecone";

import { Pinecone as PineconeClient } from "@pinecone-database/pinecone";

@Injectable()

export class RagService {

private vectorStore: PineconeStore;

constructor(private configService: ConfigService) {

const pinecone = new PineconeClient({

apiKey: this.configService.get<string>('PINECONE_API_KEY'),

});

const index = pinecone.Index(this.configService.get<string>('PINECONE_INDEX'));

this.vectorStore = new PineconeStore(

new OpenAIEmbeddings({

apiKey: this.configService.get<string>('OPENAI_API_KEY'),

}),

{ pineconeIndex: index }

);

}

async search(query: string) {

// This uses the API keys to turn the query into a vector

// and find the matching math in Pinecone

return await this.vectorStore.similaritySearch(query, 3);

}

}

How the flow works (Step-by-Step)

Boot Up: NestJS loads the

.envfile into memory.Handshake: The

RagServiceuses thePINECONE_API_KEYto verify it has permission to talk to your database.The "Ask": When a user asks a question, the code uses the

OPENAI_API_KEYto send that question to OpenAI to be converted into a mathematical vector (a list of ~1,500 numbers).The Comparison: That vector is sent to Pinecone. Pinecone finds the "closest" vectors (the most relevant textbook chunks) and sends them back.

The Final Answer: Your code sends those chunks + the original question back to OpenAI to generate a human-friendly response.

Quick Resource Recap

Security: Use Secret Manager if you move to production (AWS/GCP).

Validation: Use

joiorclass-validatorwith your ConfigService to ensure the app doesn't even start if a key is missing.

Would you like to see how to "Filter" the search so a student only gets answers from the specific class they are currently viewing leave a comment?

Phase 4: Agentic AI—The Final Frontier

The "cool" job in 2026 isn't a prompt engineer; it’s an AI Agent Architect. This involves building systems where multiple AI "agents" talk to each other to solve a goal (e.g., one agent researches, one writes, one edits).

Phase 4 represents the frontier of AI in 2026: moving from "Chatbots" to "Colleagues." At this stage, you aren't just calling an API; you are designing an Autonomous Operating System.

The Core Philosophy: Agents as Systems

In 2026, an "Agent" is defined as a system that receives a goal (not just a prompt) and iteratively plans, acts, and observes until that goal is met.

1. The 4 Canonical Agentic Design Patterns

To build reliable agents, the industry has standardized on four specific architectural patterns:

Reflection (Self-Correction): The agent follows a Generate → Critique → Refine loop. Instead of accepting the first draft, the agent acts as its own peer-reviewer to catch hallucinations and logic errors.

Tool Use (Reasoning + Acting/ReAct): The agent has a "Swiss Army Knife" of APIs. It reasons about a problem, chooses a tool (e.g., Google Search, SQL, Calculator), observes the result, and repeats.

Planning: The agent breaks complex, multi-step goals into a dynamic to-do list. It uses "Chain-of-Thought" or "Metacognitive" prompts to determine the sequence of sub-tasks.

Multi-Agent Orchestration: Building a "Digital Team." You assign roles (e.g., a "Researcher" agent and a "Writer" agent) that collaborate and hand off tasks to one another.

2. Framework Comparison (The 2026 Landscape)

Choosing the right framework is the most important architectural decision you will make.

FrameworkDesign PhilosophyBest ForKey FeatureLangGraphState MachinesProduction-grade, high-reliability systemsTime Travel: Rewind and replay agent states to debug failures.CrewAIRole-Playing TeamRapid prototyping and business workflowsIntuitive Abstraction: Models agents like human employees (Manager, Worker).AutoGenConversationalResearch and exploratory collaborationMulti-Agent Debate: Agents negotiate with each other to solve problems.OpenAgentsInteroperabilityLarge-scale, persistent agent networksNative MCP + A2A: The first to support industry-wide cross-agent protocols.

3. Advanced Agentic Memory Systems

In Phase 4, memory is much more than a Vector Database. It is tiered:

Working Memory: The immediate conversation context (short-term).

Episodic Memory: Storing previous "trajectories" or experiences to avoid repeating past mistakes.

Procedural Memory: The skills and tool-use patterns the agent has "learned" over time.

Long-term Preferences: User-specific data stored in vector stores like Pinecone or Weaviate.

4. The "Safety First" Implementation Stack

Moving an agent to production in 2026 requires strict guardrails to prevent "infinite loops" or high costs:

Model Context Protocol (MCP): Use Anthropic’s MCP to standardize how your agents connect to enterprise data and tools safely.

Capability Gating: Never give an agent full system access. Use an allowlist of specific tools per environment (Dev/Prod).

Human-in-the-Loop (HITL): For irreversible actions like sending an email or charging a credit card, the architecture must pause and wait for human approval.

Observability: Use tools like LangSmith or Langfuse to trace exactly why an agent made a specific decision.

Phase 4 Summary Checklist

Autonomous Loop: Have you moved from a

while Trueloop to a structured framework like LangGraph?Failure Recovery: Does your agent have a "fallback" path if a tool call fails?

Cost Guardrails: Have you implemented a

max_iterationsortoken_budgetto prevent expensive runaway loops?

Would you like to see a "Multi-Agent Team" code example using CrewAI for your LMS tutor project?

Quick Resource Checklist

Best Intro Course: Andrew Ng’s Updated Machine Learning Specialization.

Best Practical Community: Kaggle for real-world datasets and competitions.

Best Sandbox: Hugging Face to play with open-source models for free.

Which part of AI interests you most—building the brains (the models) or the brawn (the agents that do the work)?

Comments (0)

Be the first to comment.

Keep reading

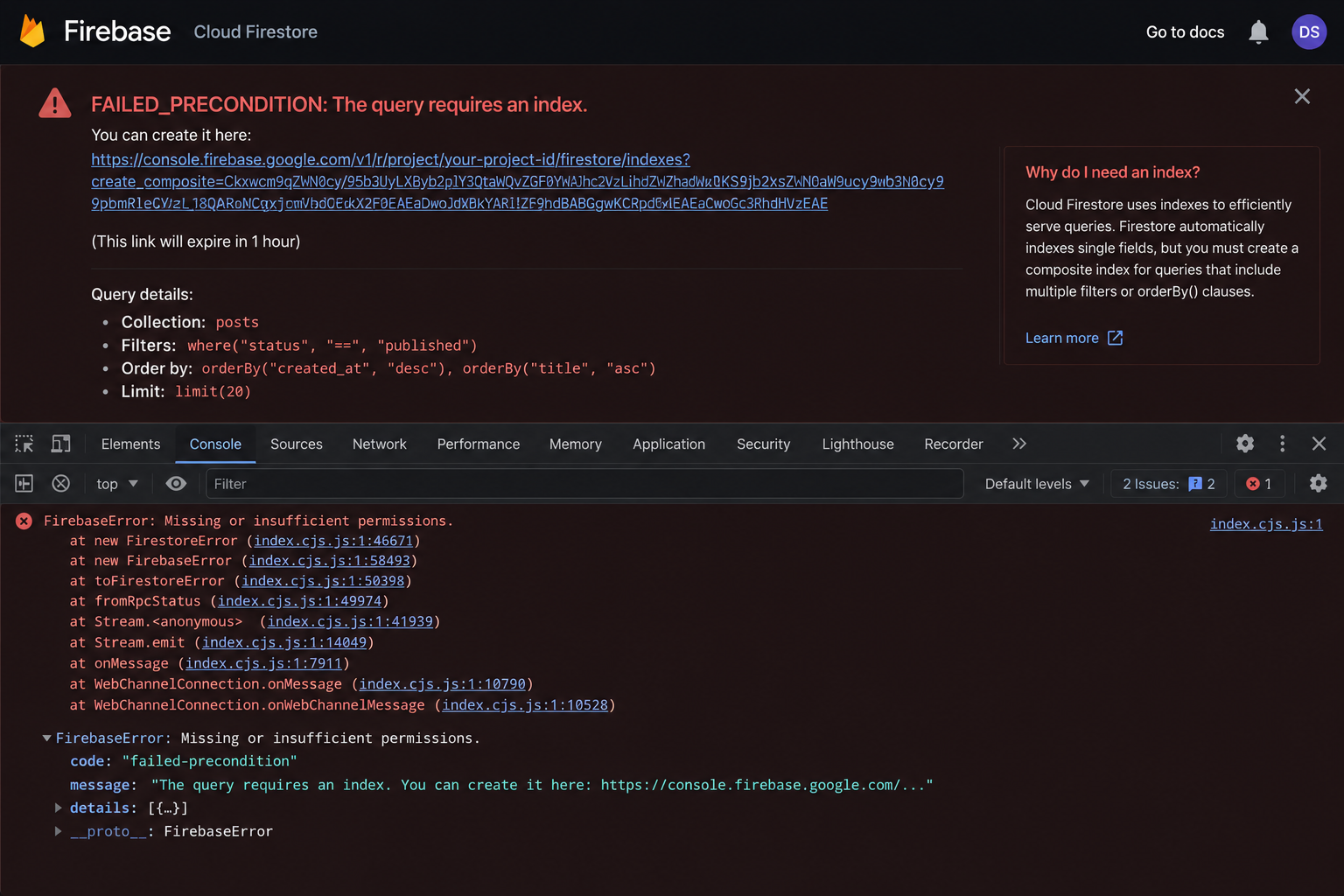

Why Your Firestore Query Silently Fails in Production

Your Firestore query works perfectly in development, then breaks silently in production. No visible error, just missing data. Ebesoh Adrian explains why multi-field queries require composite indices in NoSQL, how to diagnose them, and how denormalization can make the problem disappear entirely

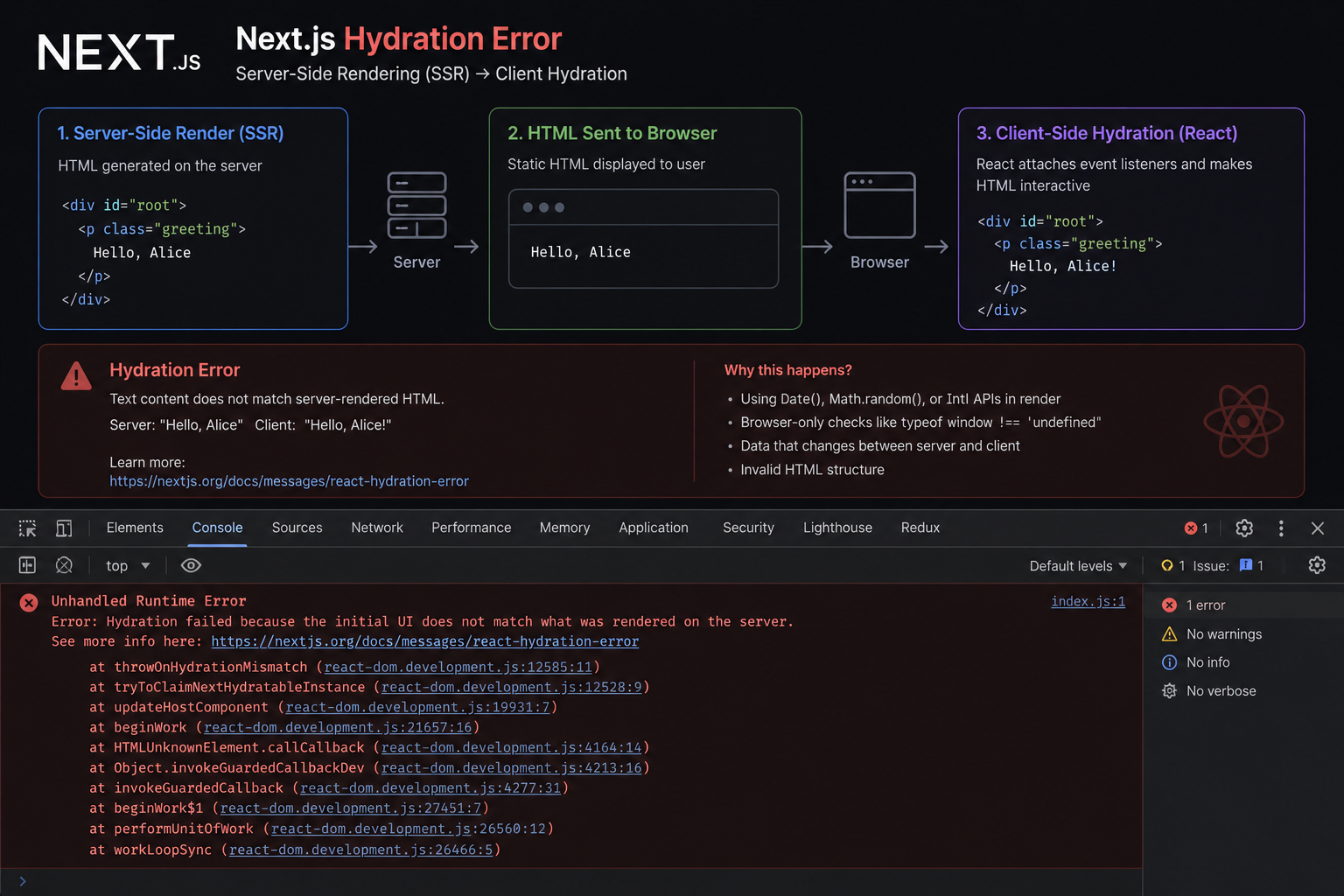

The SSR Error That Costs You the Entire Page

Text content did not match. Server: '8:00 AM' Client: '8:01 AM'." When React's hydration fails, it discards the entire server-rendered HTML and re-renders from scratch — destroying the performance benefit of SSR. Ebesoh Adrian explains why this happens and the architectural fix that prevents it permanently.

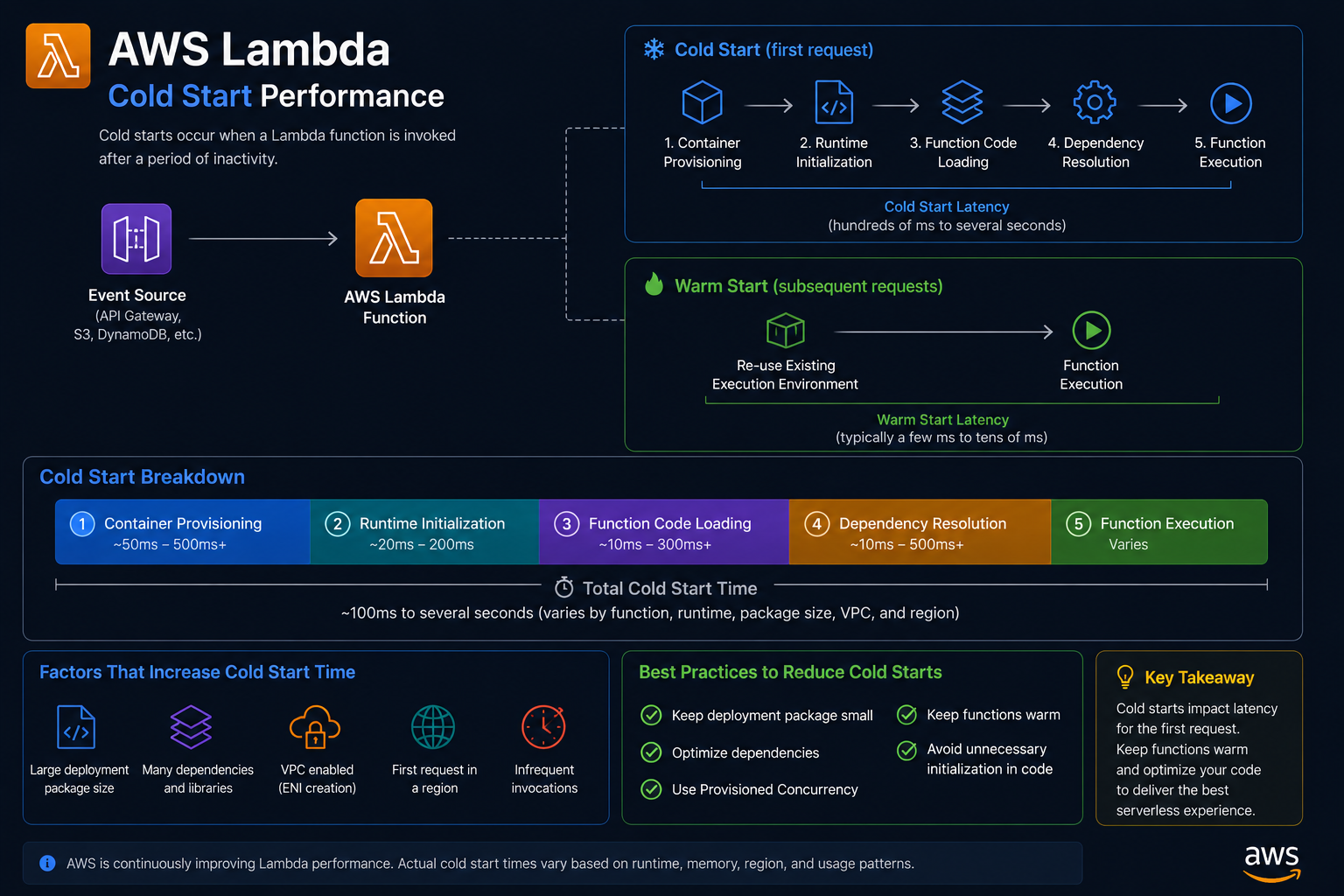

Why Your Lambda Takes 5 Seconds and How to Fix It

Your AWS Lambda function responds in 40ms when it is warm. But after a few minutes of inactivity, the first request takes over 5 seconds. Ebesoh Adrian breaks down why cold starts happen and the architectural strategies that eliminate the pain.